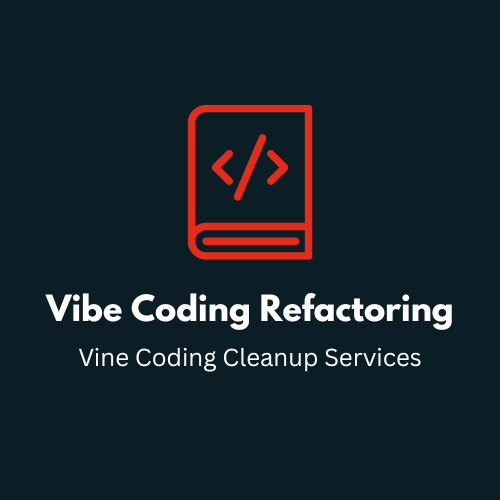

Many businesses now arrive with an AI proof of concept that was assembled quickly using copied prompts, direct model calls, loosely connected automations, and minimal system design. These experiments can be useful for learning, but they tend to break under real usage: outputs become inconsistent, errors are hard to trace, prompts are scattered across unrelated files, retrieval quality is unstable, latency spikes without warning, and nobody can explain why the system behaves the way it does.

This is where our dedicated AI developers add real value. We help correct vibe coding by auditing the current stack, separating what should be kept from what should be rebuilt, documenting implicit business logic, introducing evaluation datasets, replacing brittle prompt sprawl with intentional orchestration, and bringing deployment hygiene into the process. In practical terms, we turn an improvised AI experiment into software that a business can actually run, extend, and trust.
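
To make the idea of an evaluation dataset concrete, here is a minimal sketch in Python. The names `EvalCase`, `run_eval`, and `fake_model` are hypothetical, the sample questions are invented for illustration, and a simple substring check stands in for whatever scoring a real project would need.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class EvalCase:
    prompt: str
    must_contain: str  # substring the answer is expected to include


def run_eval(model: Callable[[str], str], cases: list[EvalCase]) -> float:
    """Return the fraction of cases whose output contains the expected substring."""
    if not cases:
        return 0.0
    passed = sum(
        1 for case in cases
        if case.must_contain.lower() in model(case.prompt).lower()
    )
    return passed / len(cases)


# Hypothetical stand-in for a real model call, so the sketch runs offline.
def fake_model(prompt: str) -> str:
    canned = {"What is the refund window?": "Refunds are accepted within 30 days."}
    return canned.get(prompt, "I am not sure.")


# Invented example cases; a real dataset would come from documented business rules.
CASES = [
    EvalCase("What is the refund window?", "30 days"),
    EvalCase("Who approves discounts over 20%?", "sales manager"),
]

score = run_eval(fake_model, CASES)
print(f"pass rate: {score:.0%}")
```

Even a small fixed set of cases like this gives a team a number to compare before and after each prompt or retrieval change, instead of judging quality from a handful of demo runs.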

We also address governance concerns that often surface only after a prototype gains internal adoption: data access, prompt leakage, role permissions, monitoring, approval gates, and recovery paths when the model fails or the input context is poor. If your current AI product looks impressive in demos but proves fragile in operations, hiring a rescue-ready AI engineering team is usually faster than continuing to patch the system with more shortcuts.
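
As one illustration of a recovery path, the sketch below shows a wrapper that refuses to call the model when the input context is too thin, retries a failed call, and otherwise returns a safe fallback. The function name `answer_with_fallback`, its thresholds, and the fallback message are assumptions chosen for this example, not a prescribed design.

```python
from typing import Callable

FALLBACK = "We could not answer this automatically. A team member will follow up."


def answer_with_fallback(
    model: Callable[[str, str], str],
    prompt: str,
    context: str,
    min_context_chars: int = 20,  # assumed threshold for "poor" context
    max_attempts: int = 2,        # assumed retry budget
) -> str:
    """Call the model with guards for poor context and failed calls."""
    # Guard 1: skip the model entirely when the context is too thin to trust.
    if len(context.strip()) < min_context_chars:
        return FALLBACK
    # Guard 2: retry failures a bounded number of times.
    for _ in range(max_attempts):
        try:
            return model(prompt, context)
        except Exception:
            continue
    # Recovery path: every attempt failed, so return a safe default.
    return FALLBACK
```

In a production system the fallback would more likely route the request to a human queue and log the failure for monitoring, but the shape of the guard stays the same.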